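PyTorch in Practice: 102-Category Flower Classification

This post contains my personal notes from the PyTorch hands-on session, day two of the computer vision training camp taught by 唐宇迪 (Tang Yudi).

The code comes from qiuzitao's "Deep Learning PyTorch in Practice (10)" and follows the same workflow as the video course; see that post for course details.

The dataset and the matching JSON file used below:

Link: https://pan.baidu.com/s/14MO6dP_Zax-DlUFfs-NLww Extraction code: j11h

Data visualization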

```python
import json
import os

import matplotlib.pyplot as plt
import numpy as np
import torch
from torchvision import datasets, transforms

# Dataset location
data_dir = './flower_data/'

# Data augmentation and preprocessing
data_transforms = {
    'train': transforms.Compose([
        transforms.RandomRotation(45),  # random rotation between -45 and 45 degrees
        transforms.CenterCrop(224),  # keep the central 224x224 region (random cropping would give more varied data)
        transforms.RandomHorizontalFlip(p=0.5),  # flip horizontally with probability 0.5
        transforms.RandomVerticalFlip(p=0.5),  # flip vertically with probability 0.5
        # arguments: brightness, contrast, saturation, hue
        transforms.ColorJitter(brightness=0.2, contrast=0.1, saturation=0.1, hue=0.1),
        transforms.RandomGrayscale(p=0.025),  # convert to grayscale with probability 0.025 (3 channels, R=G=B)
        transforms.ToTensor(),  # convert to a tensor
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]),  # mean, std (precomputed values)
    ]),
    'valid': transforms.Compose([
        transforms.Resize(256),
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]),  # same normalization as training
    ]),
}

batch_size = 16

# Build the datasets (load the images and apply the transforms)
image_datasets = {x: datasets.ImageFolder(os.path.join(data_dir, x), data_transforms[x])
                  for x in ['train', 'valid']}
# Wrap each dataset in a DataLoader that yields batches
dataloaders = {x: torch.utils.data.DataLoader(image_datasets[x], batch_size=batch_size, shuffle=True)
               for x in ['train', 'valid']}
class_names = image_datasets['train'].classes


def im_convert(tensor):
    """Convert a normalized image tensor back into a displayable array."""
    image = tensor.to("cpu").clone().detach()
    image = image.numpy().squeeze()
    image = image.transpose(1, 2, 0)  # CHW -> HWC
    image = image * np.array((0.229, 0.224, 0.225)) + np.array((0.485, 0.456, 0.406))  # undo normalization
    image = image.clip(0, 1)
    return image


# Create the figure; batch_size is 16, so use a 4x4 grid
fig = plt.figure(figsize=(20, 12))
columns = 4
rows = 4

dataiter = iter(dataloaders['valid'])
inputs, classes = next(dataiter)  # next(it) works on old and new PyTorch; it.next() was removed in newer releases
print('classes', classes)

# Lookup from folder name to flower name. This is optional: I find the folder name
# easier for checking whether a prediction is right; add it if your project needs it.
with open('cat_to_name.json', 'r') as f:
    cat_to_name = json.load(f)
# print('cat_to_name', cat_to_name)

for idx in range(columns * rows):
    ax = fig.add_subplot(rows, columns, idx + 1, xticks=[], yticks=[])
    folder = str(int(class_names[classes[idx]]))
    # Title: folder name followed by the flower name
    ax.set_title("{} ({})".format(folder, cat_to_name[folder]))
    plt.imshow(im_convert(inputs[idx]))
plt.show()
```
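The `im_convert` helper above undoes `transforms.Normalize` before plotting. As a minimal sketch of why `x * std + mean` is the right inverse, the following plain-Python example normalizes one pixel and restores it. The pixel value and the function names `normalize` and `denormalize` are illustrative assumptions, not part of the original code.

```python
# Per-channel mean and std, the same values passed to transforms.Normalize above.
MEAN = (0.485, 0.456, 0.406)
STD = (0.229, 0.224, 0.225)


def normalize(pixel):
    """Apply (x - mean) / std to one RGB pixel with values in [0, 1]."""
    return tuple((x - m) / s for x, m, s in zip(pixel, MEAN, STD))


def denormalize(pixel):
    """Invert normalize() with x * std + mean, then clip to [0, 1] for display."""
    restored = (x * s + m for x, m, s in zip(pixel, MEAN, STD))
    return tuple(min(1.0, max(0.0, v)) for v in restored)


original = (0.2, 0.5, 0.9)  # hypothetical pixel, for illustration only
roundtrip = denormalize(normalize(original))
print(roundtrip)  # matches `original` up to floating-point rounding
```

The clipping step matters only for display: augmentations such as `ColorJitter` can push restored values slightly outside [0, 1], and `imshow` expects float images in that range.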

Output: each title shows the folder name first, followed by the flower name looked up from it. Check that the name really belongs to that folder. While working on this, the class names shown during testing did not match the folders; this was an indexing problem, so the later code changes the indexing slightly.
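The indexing problem mentioned above comes from how `ImageFolder` assigns labels: it sorts the class folder names as strings, so label index 1 is folder "10", not folder "2". A small sketch of the lookup chain from label index to folder name to flower name follows. The two entries in `cat_to_name` are hypothetical placeholders standing in for the real `cat_to_name.json`.

```python
# Folder names in this dataset are the strings "1" ... "102".
folder_names = [str(i) for i in range(1, 103)]

# ImageFolder sorts folder names lexicographically, so "10" comes before "2".
class_names = sorted(folder_names)

# Hypothetical two-entry excerpt standing in for cat_to_name.json.
cat_to_name = {"1": "flower A", "10": "flower B"}


def label_to_name(label_index):
    """Map a label index to (folder name, flower name)."""
    folder = class_names[label_index]
    return folder, cat_to_name.get(folder, "<unknown>")


print(class_names[:5])
print(label_to_name(1))
```

Looking up `cat_to_name[str(label_index)]` directly would skip the folder-name step and return the wrong flower for most labels, which is the mismatch described above.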

train

The code below skips visualization and goes straight to training.

```python
import copy
import os
import time

import torch
from torch import nn
import torch.optim as optim
# Official torchvision docs (with usage examples): https://pytorch.org/docs/stable/torchvision/index.html
from torchvision import datasets, models, transforms

data_dir = './flower_data/'

data_transforms = {
    'train': transforms.Compose([
        transforms.RandomRotation(45),  # random rotation between -45 and 45 degrees
        transforms.CenterCrop(224),  # keep the central 224x224 region (random cropping would give more varied data)
        transforms.RandomHorizontalFlip(p=0.5),  # flip horizontally with probability 0.5
        transforms.RandomVerticalFlip(p=0.5),  # flip vertically with probability 0.5
        # arguments: brightness, contrast, saturation, hue
        transforms.ColorJitter(brightness=0.2, contrast=0.1, saturation=0.1, hue=0.1),
        transforms.RandomGrayscale(p=0.025),  # convert to grayscale with probability 0.025 (3 channels, R=G=B)
        transforms.ToTensor(),  # convert to a tensor
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]),  # mean, std (precomputed values)
    ]),
    'valid': transforms.Compose([
        transforms.Resize(256),
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]),  # same normalization as training
    ]),
}

# Batch size (adjust to your hardware)
batch_size = 64

# Build the datasets (load the images and apply the transforms)
image_datasets = {x: datasets.ImageFolder(os.path.join(data_dir, x), data_transforms[x])
                  for x in ['train', 'valid']}
# Wrap each dataset in a DataLoader that yields batches
dataloaders = {x: torch.utils.data.DataLoader(image_datasets[x], batch_size=batch_size, shuffle=True)
               for x in ['train', 'valid']}
class_names = image_datasets['train'].classes
# print('image_datasets', image_datasets)
# print('dataloaders', dataloaders)
# print('class_names', class_names)

# Options: ['resnet', 'alexnet', 'vgg', 'squeezenet', 'densenet', 'inception']
model_name = 'resnet'
# Whether to reuse the pretrained features (freeze the backbone)
feature_extract = True

# Whether a GPU is available for training
train_on_gpu = torch.cuda.is_available()
if not train_on_gpu:
    print('CUDA is not available. Training on CPU ...')
else:
    print('CUDA is available! Training on GPU ...')
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")


def set_parameter_requires_grad(model, feature_extracting):
    """Freeze every layer when feature extracting.

    With little data, freezing the earlier layers and training only the final
    fc layer is a common choice.
    """
    if feature_extracting:
        for param in model.parameters():
            param.requires_grad = False  # set to True to train the layer


def initialize_model(model_name, num_classes, feature_extract, use_pretrained=True):
    """Build the chosen model; each architecture replaces its head slightly differently."""
    # Note: newer torchvision releases prefer the `weights=` argument over `pretrained=`.
    model_ft = None
    input_size = 0

    if model_name == "resnet":
        # ResNet-152
        model_ft = models.resnet152(pretrained=use_pretrained)
        set_parameter_requires_grad(model_ft, feature_extract)
        num_ftrs = model_ft.fc.in_features
        model_ft.fc = nn.Sequential(nn.Linear(num_ftrs, num_classes), nn.LogSoftmax(dim=1))
        input_size = 224
    elif model_name == "alexnet":
        # AlexNet
        model_ft = models.alexnet(pretrained=use_pretrained)
        set_parameter_requires_grad(model_ft, feature_extract)
        num_ftrs = model_ft.classifier[6].in_features
        model_ft.classifier[6] = nn.Linear(num_ftrs, num_classes)
        input_size = 224
    elif model_name == "vgg":
        # VGG16
        model_ft = models.vgg16(pretrained=use_pretrained)
        set_parameter_requires_grad(model_ft, feature_extract)
        num_ftrs = model_ft.classifier[6].in_features
        model_ft.classifier[6] = nn.Linear(num_ftrs, num_classes)
        input_size = 224
    elif model_name == "squeezenet":
        # SqueezeNet 1.0
        model_ft = models.squeezenet1_0(pretrained=use_pretrained)
        set_parameter_requires_grad(model_ft, feature_extract)
        model_ft.classifier[1] = nn.Conv2d(512, num_classes, kernel_size=(1, 1), stride=(1, 1))
        model_ft.num_classes = num_classes
        input_size = 224
    elif model_name == "densenet":
        # DenseNet-121
        model_ft = models.densenet121(pretrained=use_pretrained)
        set_parameter_requires_grad(model_ft, feature_extract)
        num_ftrs = model_ft.classifier.in_features
        model_ft.classifier = nn.Linear(num_ftrs, num_classes)
        input_size = 224
    elif model_name == "inception":
        # Inception v3. Be careful: expects (299, 299) images and has an auxiliary output.
        model_ft = models.inception_v3(pretrained=use_pretrained)
        set_parameter_requires_grad(model_ft, feature_extract)
        # Handle the auxiliary net
        num_ftrs = model_ft.AuxLogits.fc.in_features
        model_ft.AuxLogits.fc = nn.Linear(num_ftrs, num_classes)
        # Handle the primary net
        num_ftrs = model_ft.fc.in_features
        model_ft.fc = nn.Linear(num_ftrs, num_classes)
        input_size = 299
    else:
        raise ValueError("Invalid model name: {}".format(model_name))

    return model_ft, input_size


# Use the 102 classes we want to train on
model_ft, input_size = initialize_model(model_name, 102, feature_extract, use_pretrained=True)

# Move the model to the GPU if available
model_ft = model_ft.to(device)

# Checkpoint file name (prepend a directory to save elsewhere)
filename = 'checkpoint.pth'

# Collect the parameters to train
params_to_update = model_ft.parameters()
print("Params to learn:")
if feature_extract:
    params_to_update = []
    for name, param in model_ft.named_parameters():
        if param.requires_grad:
            params_to_update.append(param)
            print("\t", name)
else:
    for name, param in model_ft.named_parameters():
        if param.requires_grad:
            print("\t", name)

# Inspect the model architecture
print('model_ft', model_ft)

# Optimizer and learning-rate schedule
optimizer_ft = optim.Adam(params_to_update, lr=1e-2)
# Multiply the learning rate by 0.1 every 7 epochs
scheduler = optim.lr_scheduler.StepLR(optimizer_ft, step_size=7, gamma=0.1)
# The resnet head already ends in LogSoftmax, so use NLLLoss rather than CrossEntropyLoss;
# CrossEntropyLoss is equivalent to LogSoftmax followed by NLLLoss.
# The other branches output raw scores, so use nn.CrossEntropyLoss() with them.
criterion = nn.NLLLoss()


def train_model(model, dataloaders, criterion, optimizer, scheduler, num_epochs=25,
                is_inception=False, filename=filename):
    since = time.time()
    best_acc = 0
    # To resume from a saved checkpoint, uncomment:
    # checkpoint = torch.load(filename)
    # best_acc = checkpoint['best_acc']
    # model.load_state_dict(checkpoint['state_dict'])
    # optimizer.load_state_dict(checkpoint['optimizer'])
    model.to(device)

    val_acc_history = []
    train_acc_history = []
    train_losses = []
    valid_losses = []
    LRs = [optimizer.param_groups[0]['lr']]

    best_model_wts = copy.deepcopy(model.state_dict())

    for epoch in range(num_epochs):
        print('Epoch {}/{}'.format(epoch, num_epochs - 1))
        print('-' * 10)

        # Each epoch has a training phase and a validation phase
        for phase in ['train', 'valid']:
            if phase == 'train':
                model.train()
            else:
                model.eval()

            running_loss = 0.0
            running_corrects = 0

            # Iterate over the whole dataset
            for inputs, labels in dataloaders[phase]:
                inputs = inputs.to(device)
                labels = labels.to(device)

                # Reset the gradients
                optimizer.zero_grad()
                # Track gradients only during training
                with torch.set_grad_enabled(phase == 'train'):
                    if is_inception and phase == 'train':
                        outputs, aux_outputs = model(inputs)
                        loss1 = criterion(outputs, labels)
                        loss2 = criterion(aux_outputs, labels)
                        loss = loss1 + 0.4 * loss2
                    else:  # resnet takes this branch
                        outputs = model(inputs)
                        loss = criterion(outputs, labels)

                    _, preds = torch.max(outputs, 1)

                    # Update the weights during training only
                    if phase == 'train':
                        loss.backward()
                        optimizer.step()

                # Accumulate the loss (weighted by batch size) and the correct count
                running_loss += loss.item() * inputs.size(0)
                running_corrects += torch.sum(preds == labels.data)

            epoch_loss = running_loss / len(dataloaders[phase].dataset)
            epoch_acc = running_corrects.double() / len(dataloaders[phase].dataset)

            time_elapsed = time.time() - since
            print('Time elapsed {:.0f}m {:.0f}s'.format(time_elapsed // 60, time_elapsed % 60))
            print('{} Loss: {:.4f} Acc: {:.4f}'.format(phase, epoch_loss, epoch_acc))

            # Keep the best model seen so far
            if phase == 'valid' and epoch_acc > best_acc:
                best_acc = epoch_acc
                best_model_wts = copy.deepcopy(model.state_dict())
                state = {
                    'state_dict': model.state_dict(),
                    'best_acc': best_acc,
                    'optimizer': optimizer.state_dict(),
                }
                torch.save(state, filename)
            if phase == 'valid':
                val_acc_history.append(epoch_acc)
                valid_losses.append(epoch_loss)
            if phase == 'train':
                train_acc_history.append(epoch_acc)
                train_losses.append(epoch_loss)

        # StepLR advances once per epoch and takes no loss argument
        scheduler.step()
        print('Optimizer learning rate : {:.7f}'.format(optimizer.param_groups[0]['lr']))
        LRs.append(optimizer.param_groups[0]['lr'])
        print()

    time_elapsed = time.time() - since
    print('Training complete in {:.0f}m {:.0f}s'.format(time_elapsed // 60, time_elapsed % 60))
    print('Best val Acc: {:4f}'.format(best_acc))

    # Use the best weights as the final model
    model.load_state_dict(best_model_wts)
    return model, val_acc_history, train_acc_history, valid_losses, train_losses, LRs


model_ft, val_acc_history, train_acc_history, valid_losses, train_losses, LRs = train_model(
    model_ft, dataloaders, criterion, optimizer_ft, scheduler,
    num_epochs=20, is_inception=(model_name == "inception"))
```
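The comment next to `criterion = nn.NLLLoss()` states that `nn.CrossEntropyLoss()` combines log-softmax with the negative log-likelihood loss. The following plain-Python sketch checks that relationship for a single sample without using PyTorch. The function names and the example scores are assumptions made for illustration; they mirror the math, not the library internals.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over a list of raw scores."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - log_sum for z in logits]


def nll_loss(log_probs, target):
    """Negative log-likelihood of the target class for one sample."""
    return -log_probs[target]


def cross_entropy(logits, target):
    """Cross-entropy computed directly from raw scores: logsumexp(z) - z[target]."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum - logits[target]


logits = [2.0, 0.5, -1.0]  # hypothetical raw scores for 3 classes
target = 0

two_step = nll_loss(log_softmax(logits), target)  # LogSoftmax head + NLLLoss
one_step = cross_entropy(logits, target)          # CrossEntropyLoss on raw scores
print(two_step, one_step)  # the two values agree up to rounding
```

This is why the head and the loss have to be chosen together: a head that outputs raw scores pairs with cross-entropy, while a head that already applies log-softmax pairs with the negative log-likelihood.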

After training, the model weight file is generated:

test

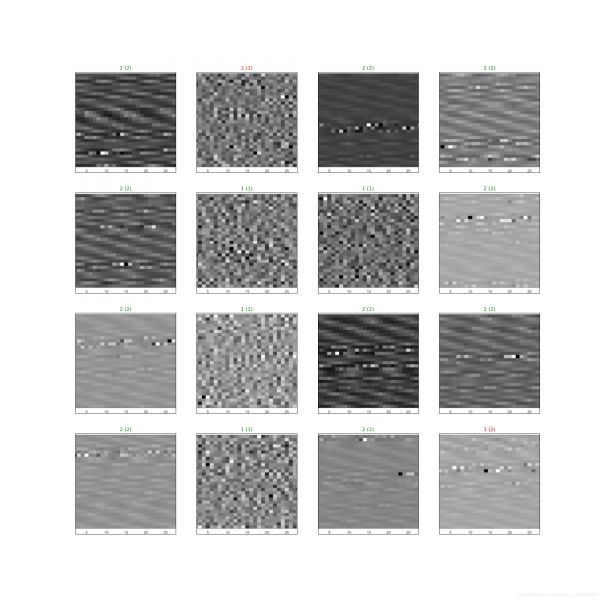

Test with the model trained above:

```python
import json
import os

import matplotlib.pyplot as plt
import numpy as np
import torch
from torch import nn
# Install with `pip install torchvision` if the module is missing.
# Official docs (with usage examples): https://pytorch.org/docs/stable/torchvision/index.html
from torchvision import datasets, models, transforms

data_dir = './flower_data/'

data_transforms = {
    'train': transforms.Compose([
        transforms.RandomRotation(45),  # random rotation between -45 and 45 degrees
        transforms.CenterCrop(224),  # keep the central 224x224 region (random cropping would give more varied data)
        transforms.RandomHorizontalFlip(p=0.5),  # flip horizontally with probability 0.5
        transforms.RandomVerticalFlip(p=0.5),  # flip vertically with probability 0.5
        # arguments: brightness, contrast, saturation, hue
        transforms.ColorJitter(brightness=0.2, contrast=0.1, saturation=0.1, hue=0.1),
        transforms.RandomGrayscale(p=0.025),  # convert to grayscale with probability 0.025 (3 channels, R=G=B)
        transforms.ToTensor(),  # convert to a tensor
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]),  # mean, std (precomputed values)
    ]),
    'valid': transforms.Compose([
        transforms.Resize(256),
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]),  # same normalization as training
    ]),
}

batch_size = 16

image_datasets = {x: datasets.ImageFolder(os.path.join(data_dir, x), data_transforms[x])
                  for x in ['train', 'valid']}
dataloaders = {x: torch.utils.data.DataLoader(image_datasets[x], batch_size=batch_size, shuffle=True)
               for x in ['train', 'valid']}
class_names = image_datasets['train'].classes

# Options: ['resnet', 'alexnet', 'vgg', 'squeezenet', 'densenet', 'inception']
model_name = 'resnet'
# Whether to reuse the pretrained features (freeze the backbone)
feature_extract = True

with open('cat_to_name.json', 'r') as f:
    cat_to_name = json.load(f)
# print('cat_to_name', cat_to_name)

# Whether a GPU is available
train_on_gpu = torch.cuda.is_available()
if not train_on_gpu:
    print('CUDA is not available. Running on CPU ...')
else:
    print('CUDA is available! Running on GPU ...')
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")


def set_parameter_requires_grad(model, feature_extracting):
    if feature_extracting:
        for param in model.parameters():
            param.requires_grad = False


def initialize_model(model_name, num_classes, feature_extract, use_pretrained=True):
    """Build the chosen model; each architecture replaces its head slightly differently."""
    model_ft = None
    input_size = 0

    if model_name == "resnet":
        # ResNet-152
        model_ft = models.resnet152(pretrained=use_pretrained)
        set_parameter_requires_grad(model_ft, feature_extract)
        num_ftrs = model_ft.fc.in_features
        model_ft.fc = nn.Sequential(nn.Linear(num_ftrs, num_classes), nn.LogSoftmax(dim=1))
        input_size = 224
    elif model_name == "alexnet":
        # AlexNet
        model_ft = models.alexnet(pretrained=use_pretrained)
        set_parameter_requires_grad(model_ft, feature_extract)
        num_ftrs = model_ft.classifier[6].in_features
        model_ft.classifier[6] = nn.Linear(num_ftrs, num_classes)
        input_size = 224
    elif model_name == "vgg":
        # VGG16
        model_ft = models.vgg16(pretrained=use_pretrained)
        set_parameter_requires_grad(model_ft, feature_extract)
        num_ftrs = model_ft.classifier[6].in_features
        model_ft.classifier[6] = nn.Linear(num_ftrs, num_classes)
        input_size = 224
    elif model_name == "squeezenet":
        # SqueezeNet 1.0
        model_ft = models.squeezenet1_0(pretrained=use_pretrained)
        set_parameter_requires_grad(model_ft, feature_extract)
        model_ft.classifier[1] = nn.Conv2d(512, num_classes, kernel_size=(1, 1), stride=(1, 1))
        model_ft.num_classes = num_classes
        input_size = 224
    elif model_name == "densenet":
        # DenseNet-121
        model_ft = models.densenet121(pretrained=use_pretrained)
        set_parameter_requires_grad(model_ft, feature_extract)
        num_ftrs = model_ft.classifier.in_features
        model_ft.classifier = nn.Linear(num_ftrs, num_classes)
        input_size = 224
    elif model_name == "inception":
        # Inception v3. Be careful: expects (299, 299) images and has an auxiliary output.
        model_ft = models.inception_v3(pretrained=use_pretrained)
        set_parameter_requires_grad(model_ft, feature_extract)
        # Handle the auxiliary net
        num_ftrs = model_ft.AuxLogits.fc.in_features
        model_ft.AuxLogits.fc = nn.Linear(num_ftrs, num_classes)
        # Handle the primary net
        num_ftrs = model_ft.fc.in_features
        model_ft.fc = nn.Linear(num_ftrs, num_classes)
        input_size = 299
    else:
        raise ValueError("Invalid model name: {}".format(model_name))

    return model_ft, input_size


# The checkpoint overwrites every weight, so the pretrained download is not needed here
model_ft, input_size = initialize_model(model_name, 102, feature_extract, use_pretrained=False)

# Move the model to the GPU if available
model_ft = model_ft.to(device)

# Checkpoint file written by the training script
filename = 'checkpoint.pth'

# Load the checkpoint; map_location lets a GPU-trained checkpoint load on a CPU-only machine
checkpoint = torch.load(filename, map_location=device)
best_acc = checkpoint['best_acc']
model_ft.load_state_dict(checkpoint['state_dict'])

# Fetch one batch of test data
dataiter = iter(dataloaders['valid'])
images, labels = next(dataiter)  # next(it) works on old and new PyTorch; it.next() was removed in newer releases
print('labels', labels)

model_ft.eval()
with torch.no_grad():  # no gradients are needed for inference
    output = model_ft(images.to(device))
# print('output.shape', output.shape)

# Index of the highest-scoring class for each image
_, preds_tensor = torch.max(output, 1)
preds = np.squeeze(preds_tensor.cpu().numpy())
print('preds', preds)

fig = plt.figure(figsize=(20, 20))
columns = 4
rows = 4


def im_convert(tensor):
    """Convert a normalized image tensor back into a displayable array."""
    image = tensor.to("cpu").clone().detach()
    image = image.numpy().squeeze()
    image = image.transpose(1, 2, 0)  # CHW -> HWC
    image = image * np.array((0.229, 0.224, 0.225)) + np.array((0.485, 0.456, 0.406))  # undo normalization
    image = image.clip(0, 1)
    return image


for idx in range(columns * rows):
    # Map label indices to folder names first, then folder names to flower names
    labels_true = str(class_names[labels[idx]])
    labels_preds = str(class_names[preds[idx]])
    ax = fig.add_subplot(rows, columns, idx + 1, xticks=[], yticks=[])
    # Title: predicted folder-name (true folder-name); green if correct, red otherwise
    ax.set_title("{}-{} ({}-{})".format(labels_preds, cat_to_name[labels_preds],
                                        labels_true, cat_to_name[labels_true]),
                 color=("green" if cat_to_name[labels_preds] == cat_to_name[labels_true] else "red"))
    plt.imshow(im_convert(images[idx]))
plt.savefig('1.png')
plt.show()
```
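Because the resnet head ends in `LogSoftmax`, the test script's `output` holds log-probabilities, and `torch.max(output, 1)` picks the most likely class per image. The sketch below walks through the same steps in plain Python: take the argmax, recover the probability with `exp`, and summarize the batch as the green/red title colors plus an accuracy figure. The log-probabilities, labels, and the helper name `predict` are hypothetical values chosen for illustration.

```python
import math


def predict(log_probs):
    """Return (class index, probability) for one row of log-probabilities."""
    best = max(range(len(log_probs)), key=lambda i: log_probs[i])
    return best, math.exp(log_probs[best])


# Hypothetical log-probabilities for two images over three classes.
batch = [
    [math.log(0.7), math.log(0.2), math.log(0.1)],
    [math.log(0.1), math.log(0.3), math.log(0.6)],
]
labels = [0, 1]  # hypothetical true label indices

results = [predict(row) for row in batch]
preds = [idx for idx, _ in results]

# Same rule as the plot titles: green when the prediction matches the label.
colors = ["green" if p == t else "red" for p, t in zip(preds, labels)]
accuracy = colors.count("green") / len(labels)
print(preds, colors, accuracy)
```

A single batch of 16 images gives only a rough impression; averaging the same count over the whole validation loader would give a number comparable to the accuracy printed during training.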

The test result is saved to 1.png:

For other classification tasks:

